AMD Athlon X2 5000 X2 5000+ 2.2 GHz Dual-Core CPU Processor ADO500BIAA5DO ADO5000IAA5DO ADO5000IAA5DU Socket AM2+ - Buy cheap in an online store with delivery: price comparison, specifications, photos and customer reviews

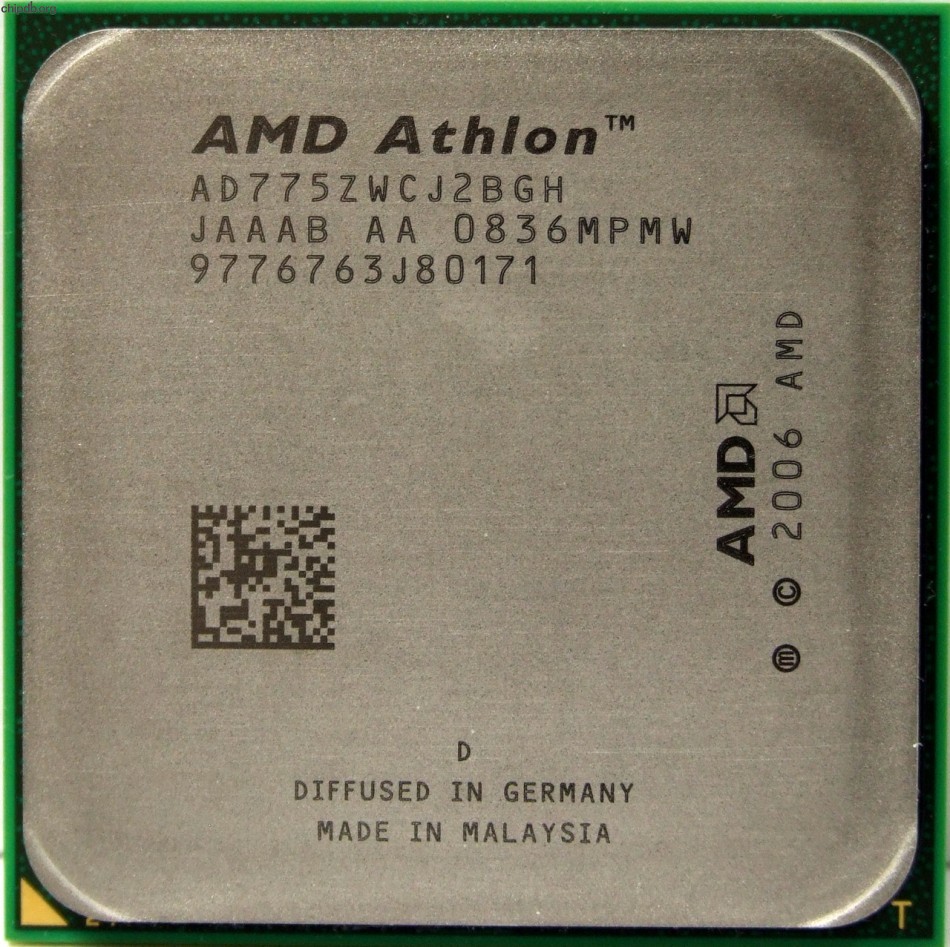

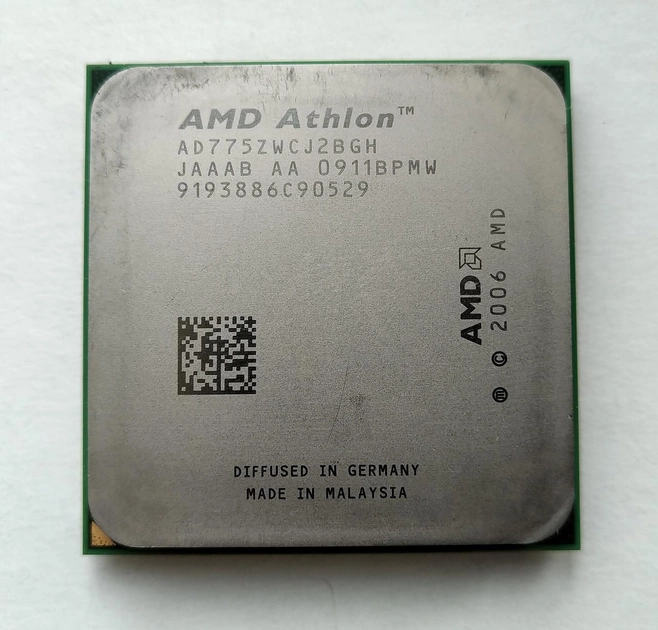

AMD Athlon X2 7750 (AD775ZWCJ2BGH) CPU Processor 1000 MHz 2.7GHz Socket AM2+ 95W 844750005881 | eBay

AMD Athlon X2 7750 X2 775Z 2.7 GHz Dual Core CPU Processor AD7750WCJ2BGH / AD775ZWCJ2BGH Socket AM2+ | socket am2 | athlon x2cpu processor - AliExpress

ROZETKA | AMD Athlon X2 7750 Black Edition 2.7GHz sAM2+ Tray (AD775ZWCJ2BGH) Kuma processor, used. Price, buy AMD Athlon X2 7750 Black Edition 2.7GHz sAM2+ Tray (AD775ZWCJ2BGH) Kuma processor, used, in Kyiv,

AMD Athlon Processor 2.7GHz (AD775ZWCJ2BGH), Computers & Tech, Parts & Accessories, Laptop Bags & Sleeves on Carousell